A/B Testing and Optimization Checklist to Win Local AI Answers with FAQ Snippets on Lovable Sites

A guide covering a/B testing and optimization checklist to win local AI answers with FAQ snippets on Lovable sites, including hypotheses, metrics, and reporting schema.

TL;DR

- Run focused a/b test faq snippets per city to measure AI-answer inclusion rate and CTR improvements.

- Test phrase-level and field-level GEO signals, plus structured data and snippet length variations.

- Measure wins with manual SERP checks, automated scraping, and Google Search Console signals.

- Roll winners into Lovable templates with server-side variants or LovableSEO publish rules for scale.

Introduction: This checklist helps you optimize faq snippets local ai answers on Lovable sites. You’ll get practical hypothesis examples, test design guidance, metric definitions (including a quotable AI-answer statistic template), implementation options on Lovable, measurement approaches, and a reusable reporting schema you can copy into dashboards.

Why A/B testing FAQ snippets increases chances of AI-answer inclusion

"Without targeted experiments, you can’t determine which FAQ phrasing an AI system prefers. A/B testing isolates variables, allowing you to pinpoint the exact snippet variants that the search engine or AI assistant selects as answers. For instance, a question phrased "Do you offer emergency plumbing in [city]?" might trigger local AI answers when the city token is included; the same answer text without a city may not. This highlights the importance of scaling localized FAQ hubs to optimize AI responses effectively."

Practical benefit: testing shows whether shorter, direct-answer snippets win AI selection or whether longer, context-rich answers perform better for local queries. This is central to optimize faq snippets local ai answers because AI-answer systems favor clarity, locality, and coverage of intent. For more on this, see Programmatic faq hubs.

Testing one variable at a time prevents false positives and reveals actionable changes you can scale across locations. Track AI-answer inclusion rate by city to identify local content winners — a +X% increase in locale-specific FAQ phrasing often correlates with higher SERP feature ownership.

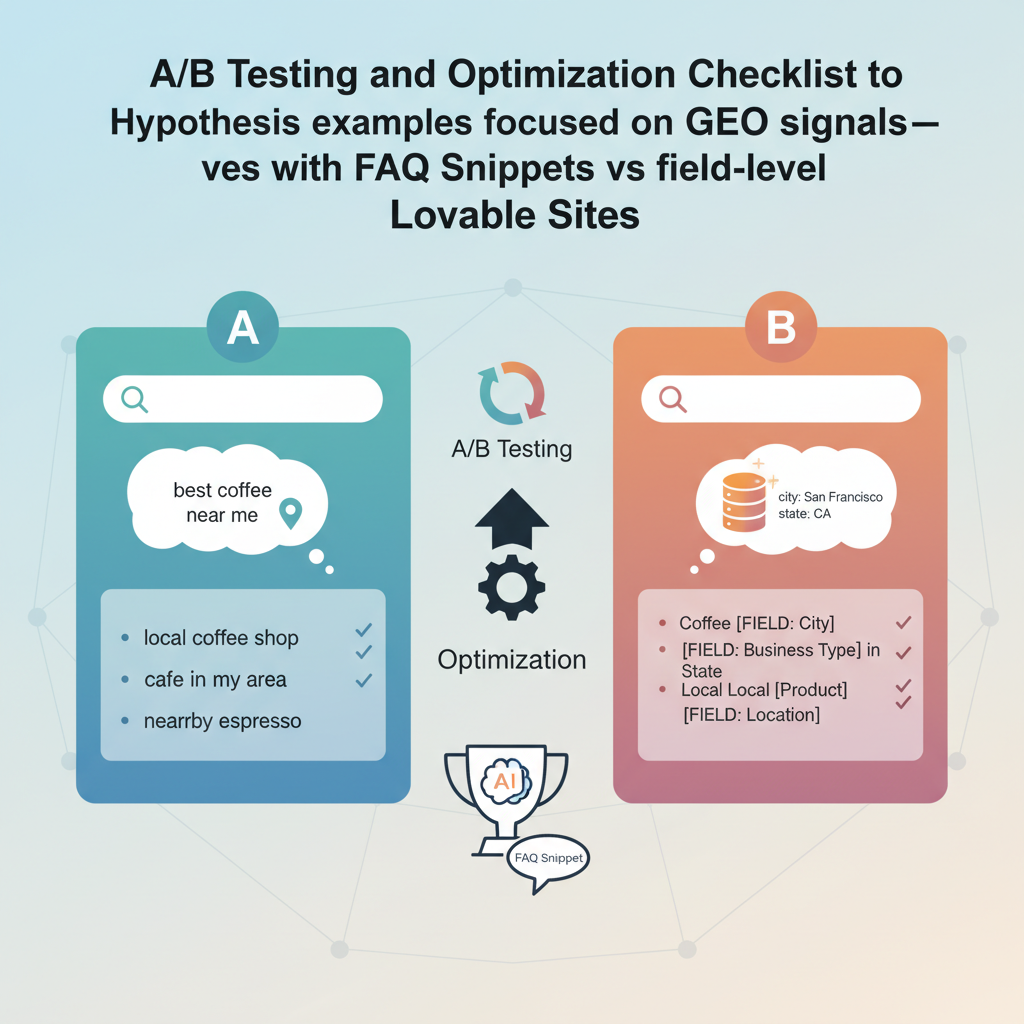

Hypothesis examples focused on GEO signals (phrase-level vs field-level)

Form clear, testable hypotheses. Phrase-level GEO hypothesis: "Including the metro name inside the question (phrase-level) increases AI-answer inclusion rate by 8–12% for local intent queries compared with generic questions." Field-level GEO hypothesis: "Keeping the city in a structured field (page metadata or schema) but not in the user-facing question will perform worse than phrase-level tokens for AI answers on non-brand local queries."

Examples you can implement on Lovable: create variation A that uses "Do you serve downtown Chicago?" in the FAQ question and variation B that uses "Do you serve our area?" while placing "Chicago" in a hidden structured-data field via Lovable's LovableSEO publish rules. Track results separately by metro and postal code.

Phrase-level location tokens in visible questions often outperform hidden fields for immediate AI snippet selection in local queries.

Designing an A/B test for FAQ pages on Lovable (metrics, sample size, duration)

Design tests to detect changes in AI-answer inclusion and conversion. Metrics: impressions, clicks, AI-snippet-flag, CTR, organic conversions, and bounce rate. Sample size: target a minimum of 1,000 impressions per variation per location to detect moderate uplifts; if impressions are lower, aggregate similar metros or extend the test period. Duration: run tests at least 4 weeks to smooth weekly traffic cycles; extend to 8–12 weeks for low-volume locations.

Concrete thresholds: aim for a detectable CTR delta of 0.5–1.0 percentage point with power 0.8; if you can’t reach those sample sizes, use multi-location pooling and report by cluster (e.g., metros with similar search volume).

Primary metrics: impressions, clicks, AI-snippet inclusion, organic conversions

Define each metric precisely. Impressions = number of SERP impressions tracked for the target queries. Clicks = organic clicks to the tested page. AI-snippet inclusion = binary flag per query indicating whether the AI assistant or featured snippet used your content. Organic conversions = tracked goal completions from organic sessions. CTR = clicks / impressions.

Example KPIs: increase AI-snippet inclusion rate by +5% in target metros, improve CTR by +2 percentage points, and lift organic conversions by +3% from pages that win AI snippets. Use Google Search Console to validate impressions and clicks and supplement with automated SERP capture for AI-snippet identification.

Variations to test: snippet length, question phrasing, explicit location tokens, structured data markup

Build a matrix of variations: short answer (1–2 sentences) vs long answer (3–4 sentences); direct phrasing vs conversational phrasing; question including exact city name vs generic phrasing with city in metadata; JSON-LD FAQ schema present vs absent. Prioritize tests that change one variable at a time.

Example plan: run a 2x2 test where axis A toggles visible city tokens (yes/no) and axis B toggles schema markup (JSON-LD present/absent). This helps isolate whether AI systems prefer visible locality or structured signals.

Include the secondary tactic a/b test faq snippets in headings and test notes to ensure search teams and engineers track experiment purpose and variants.

Implementation methods on Lovable (server-side variant, LovableSEO publish rules, or query-parameter testing)

Lovable supports three practical variant delivery methods: server-side variant (A/B routing on first request), LovableSEO publish rules (content-level changes published by rules for select pages), and query-parameter testing for client-side experiments. Use server-side variants when you need consistent canonical outputs; use LovableSEO publish rules to create durable variations across many pages; use query-parameter testing for rapid, low-risk validation.

Example: use LovableSEO publish rules to inject JSON-LD FAQ schema with city fields for half your location pages, and a server-side variant to present the phrase-level city token on visible FAQ questions for the other half. Track each method separately to compare delivery impact on ai answer optimization faq lovable efforts.

Measuring AI-answer wins: manual SERP checks, automated SERP scraping, and GSC signals

Combine three measurement inputs. Manual SERP checks validate the experience and capture nuance. Automated SERP scraping records whether an AI answer or featured snippet includes your text (use polite scraping and rotate user agents). Google Search Console provides impressions and clicks but won’t label AI-snippet inclusion reliably, so pair it with scraping or SERP screenshots. Log every observation with timestamp, query, location, and variation.

Sample reporting table schema (copy into your dashboard):

| city | variation | impressions | ai-snippet-flag | CTR | conversions |

|---|---|---|---|---|---|

| Metro name | A / B | numeric | 0/1 | percent | numeric |

Interpreting results and rolling winners into templates

Treat winners as templates, not one-off copy. If a variant with phrase-level city tokens and concise answers wins consistently across 6+ metros, roll that pattern into page templates using LovableSEO rules. Document decision rules: only roll variants that improve AI-snippet inclusion rate and CTR without harming conversions. Example decision rule: promote a variant when AI-snippet inclusion rate increases by >=3% and CTR increases by >=1 percentage point across three similar metros.

Common pitfalls and how to avoid negative SEO impacts

Avoid these mistakes: (1) Changing too many variables at once, which produces ambiguous results; (2) Over-optimizing by repeating city tokens unnaturally, which hurts readability; (3) Removing canonical tags when running server-side variants, which can fragment indexing. Always keep canonical and structured-data consistent across variants and use rel=canonical when necessary.

Concrete safeguard: run a pre-launch checklist that confirms canonical tags, schema validity, and mobile rendering before promoting any winner to production.

Example case study: hypothetical test with results and recommended next steps

Hypothetical: A regional home-services site tested two FAQ variants across 10 metros. Variation A used phrase-level city tokens in questions and concise answers; Variation B used generic questions but had the city in JSON-LD. After 8 weeks, Variation A showed +6% AI-snippet inclusion rate, +1.8pp CTR, and +4% conversions. Recommended next steps: roll Variation A into similar high-volume metros using LovableSEO publish rules, and re-run a confirmatory test in lower-volume postal-code clusters.

Checklist for continuous optimization and scaling across locations

Copy this checklist when scaling tests:

- Define test objective and single-variable hypothesis.

- Select 1–3 target metros or postal-code clusters.

- Ensure minimum sample size or plan for pooled analysis.

- Instrument AI-snippet-flag via automated SERP capture.

- Validate schema and canonical consistency.

- Promote winners via LovableSEO rules or server-side templates only after meeting decision rules.

FAQ

What is a/b testing and optimization checklist to win local ai answers with faq snippets on lovable sites?

An A/B testing and optimization checklist on Lovable is a step-by-step testing plan that compares FAQ snippet variants across locations to increase AI-answer inclusion rate and organic conversions by measuring impressions, clicks, AI-snippet presence, CTR, and conversions.

How does a/b testing and optimization checklist to win local ai answers with faq snippets on lovable sites work?

The checklist defines hypotheses, selects GEO segmentation, implements controlled variants via Lovable methods (server-side variant, LovableSEO publish rules, query-parameter testing), collects measurements from SERP checks and GSC, and promotes winning templates when predefined thresholds are met.

Ready to Rank Your Lovable App?

This article was automatically published using LovableSEO. Get your Lovable website ranking on Google with AI-powered SEO content.

Get Started