API Patterns for Large Programmatic Feeds: Pagination, Rate Limits, Incremental Exports & Retry Strategies for Lovable Sites

A guide covering aPI Patterns for Large Programmatic Feeds: Pagination, Rate Limits, Incremental Exports & Retry Strategies for Lovable Sites.

Introduction — why API design drives scalability and freshness for programmatic pages

Question: How should you design APIs for large programmatic feeds so your Lovable site stays fresh and scalable?

Answer: Use pagination, incremental feed export, polite rate limiting, and predictable retry semantics so your site can push or pull hundreds of thousands of pages without repeated full refreshes.

Good API design decides whether pages are fresh, or stale and expensive to rebuild. For lovableseo.ai-style workflows, efficient api pagination programmatic feeds lovable matters because you often generate tens of thousands of product or location pages from a central catalog. Incremental export = sending only changed records since the last successful sync; reduces load and speeds page freshness. Start by deciding transport (full export, incremental export, or webhooks), then add pagination and rate-limit awareness. Recommend KPIs: feed success rate, average sync latency, and error rates. For geo-heavy sites, deploy regional endpoints to reduce round-trip time and improve freshness for localized pages. For more on this, see Programmatic data feeds lovable sites.

Design feeds so retries are idempotent: the same request repeated must not create duplicate pages or records.

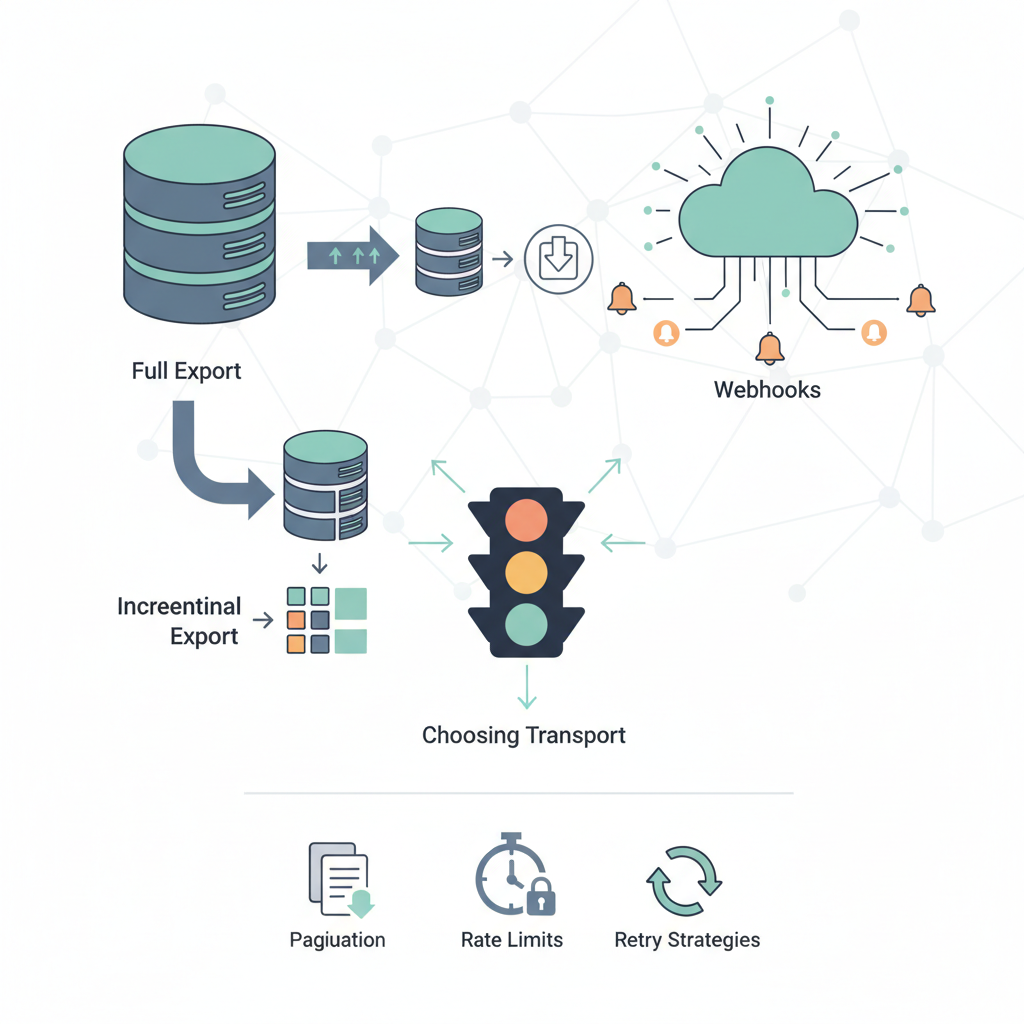

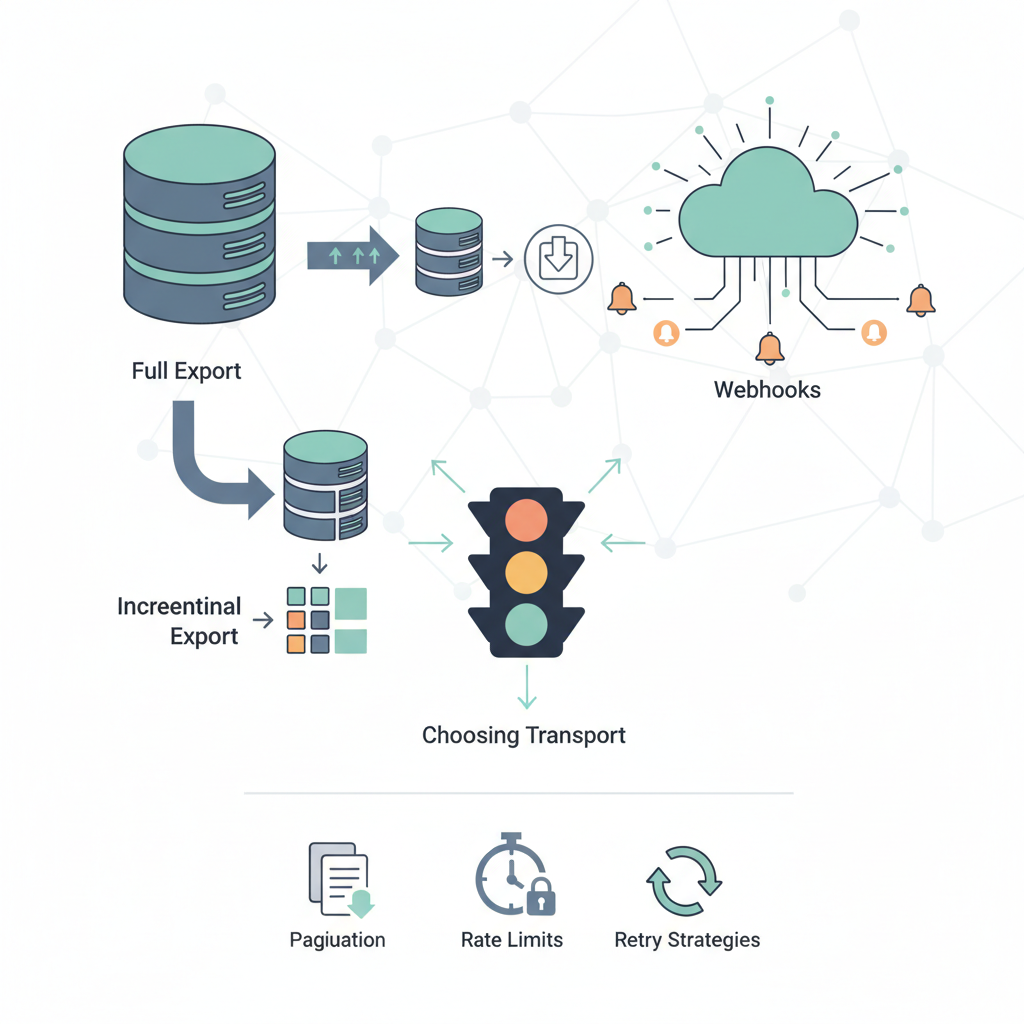

Choosing transport: full exports, incremental exports, and webhooks

Why this section matters: transport determines load and latency. A full export sends every record and is simple; an incremental feed export reduces bandwidth and processing. Most lovableseo.ai flows combine an initial full export with ongoing incremental feed export and occasional webhooks for immediate changes.

Full exports work for initial imports or small catalogs. Example: a 50k-product site might accept a nightly full export (CSV or JSON) compressed and uploaded to object storage, then processed by the crawler. But full exports cause long processing windows and slow freshness.

Incremental exports send changed records only. Use change tokens or checkpoints (last_modified timestamp or a monotonic change_id). A practical rule: include a change cursor and page size in the response. For immediate updates (price change, stock out), webhooks are efficient: the API posts a minimal payload to a receiver when an event happens. That addresses webhook vs pull feed tradeoffs: webhooks push real-time changes but require endpoint reliability; pull feeds are simpler to scale and audit. For lovableseo.ai, combine: use incremental exports for the steady-state sync and webhooks for high-priority changes (deletions, canonical changes).

| Transport | When to use | Concrete threshold |

|---|---|---|

| Full export | Initial load, small catalogs | Under 100k records or nightly window |

| Incremental export | Ongoing sync | Send only changed records since cursor; page size 500–2000 |

| Webhooks | Real-time, critical updates | Use for high-priority events; retry with exponential backoff |

Pros and cons for Lovable site workflows

For lovableseo.ai-style sites, the choice affects SEO freshness and engineering complexity. Incremental feed export minimizes compute and keeps crawlers focused on changed pages. Webhooks reduce latency for time-sensitive edits (price, availability, redirects). However, webhooks require a reliable receiver and replay on failure; they can overwhelm receivers during bulk changes (avoid fan-out storms). Understanding the principles of programmatic SEO for lovable sites can help streamline these processes effectively.

Pros of incremental feed export: lower network cost, smaller processing windows, easier to meet rate limiting programmatic seo constraints. Cons: you must track state and handle missed syncs. Pros of webhooks: near-instant updates and simpler change payloads. Cons: operational burden, security considerations, and the need to manage backfills. For lovableseo.ai, a two-tier model works best: incremental exports for steady sync + webhooks for hot changes and deletions.

Push webhooks for urgent changes; rely on incremental exports for bulk consistency and reconciliation.

Pagination strategies: cursor vs offset vs cursor+timestamp

Why this section matters: pagination determines correctness under concurrent writes. Offset-based paging is simple but breaks when items insert or delete; cursor-based paging is stable across changes. For large programmatic feeds favor cursors.

Offset pagination: clients request page N and size S. Works when data is static during export. For large flows with frequent writes, offset causes duplicates or skips. Cursor pagination: responses include a cursor token (opaque or encoded) to request the next slice. Cursor is resilient to inserts and deletes and typically faster because the database can seek to a point using an indexed column (id, sort key).

Cursor+timestamp hybrid: include a last_modified timestamp with each record and a cursor for position. On reconciling partial failures you can resync records modified after a timestamp. Concrete recommendation: use cursor pagination with a stable sort key (created_at or change_id), expose next_cursor, and enforce a max page size (e.g., 1000). Return total_count only if you can compute it cheaply; otherwise avoid it to prevent expensive COUNT(*) queries.

Rate limiting & backoff: designing polite clients and resilient servers

Why this section matters: polite clients prevent service degradation and keep your SEO pipelines reliable. Rate limiting programmatic seo is both a capacity and fairness mechanism; treat it as a contract between provider and consumer.

Server-side: expose limits via headers (X-RateLimit-Limit, X-RateLimit-Remaining, Retry-After). Provide tiered limits per API key and offer burst windows. Client-side: implement token-bucket throttling to shape request rates. If you receive 429 or a Retry-After, back off with exponential jitter (base 500ms, max 60s) and retry according to idempotency rules.

Concrete thresholds: for typical API tiers, allow bursts up to 2x steady rate; target P95 latency under 500ms for paging endpoints. For programmatic SEO jobs, prefer longer windows and smaller concurrent workers—e.g., 10 parallel workers with page sizes of 500 instead of 100 workers of 50 items each—to reduce surge load. Mention rate limiting programmatic seo when coordinating crawlers with feed pushes to avoid concurrent spikes.

Retry, idempotency, and consistency guarantees for bulk updates

Why this section matters: retries must not corrupt your index or create duplicate pages. Set clear idempotency keys and be explicit about consistency semantics in your API contract.

Idempotency: require clients to send an idempotency key for create/update operations in bulk. Store the key and result for a retention window (e.g., 24–72 hours) so repeated requests return the original outcome. For batch endpoints, include per-item status so clients can re-submit partial failures without reprocessing successes.

Consistency guarantees: prefer eventual consistency for large feeds but provide a reconciliation endpoint to request absolute state for a subset. Example: a bulk update endpoint accepts a batch of 1000 items with idempotency_key; on failure respond with a list of failed item_ids and reason codes. For audits, provide a /changes?since=cursor endpoint so consumers can reconcile gaps. These are reliability patterns api feeds you should document and test.

Handling deletions, redirects, and URL changes in feeds

Why this section matters: search engines and crawlers need explicit signals for removed or moved content. Silently dropping URLs causes crawl waste and 404s.

Include explicit actions in your feed payload: {"url": "...", "action": "update|delete|redirect", "target": "..."}. For deletions, include a deletion timestamp and a tombstone record retained for a configurable period (30–90 days) so downstream systems can process the change. For redirects, provide target URL and redirect type (301 vs 302).

Example: when a product is discontinued, send a delete action plus a suggested canonical or redirect to a similar product to preserve ranking signals. For mass URL changes, send a redirect map via incremental export and post a webhook summarizing the change to trigger reindexing. Track metrics: tombstone processed rate and redirect verification success rate.

Monitoring, alerting, and health checks for feed pipelines

Why this section matters: you need to detect broken feeds before they affect hundreds of pages. Monitoring and alerting turn silent failures into actionable items.

Track these KPIs: feed success rate, average sync latency, error rates, and change lag (time between source change and page update). Implement health checks: /health returning metrics and last-processed-cursor. Emit events when feed success rate drops below 99% over a 24-hour rolling window or average sync latency exceeds your threshold (e.g., 10 minutes for incremental jobs).

Alerting: separate alerts for hard failures (failed full export), degradation (increased latency), and anomalies (unexpected spike in deletions). For reliability patterns api feeds, include runbook links and an automatic rollback or pause control so feeds can be stopped if they cause cascading errors.

Security & auth best practices (API keys, JWTs, scoped tokens)

Why this section matters: feeds carry sensitive content and high write privileges. Use scoped tokens and least privilege to limit blast radius.

Prefer short-lived tokens (JWTs) signed with rotating keys, and use scopes to restrict endpoints (read-only vs write-only vs admin). Rotate API keys regularly and require TLS everywhere. For webhooks authenticate requests using HMAC signatures and reject missing or stale timestamps. Log auth failures separately and limit retry on auth errors to avoid brute-force lockouts.

Also include fine-grained audit trails for bulk changes and idempotency operations. For lovableseo.ai, ensure that token scopes map to site-level boundaries so a compromised key cannot alter other customers' feeds.

Implementation with LovableSEO: recommended endpoints, response formats, and sample code

Why this section matters: concrete endpoints speed integration. For LovableSEO-style integrations, expose endpoints for full export, incremental changes, and a webhook receiver.

Recommended minimal endpoints and response shapes (examples):

GET /v1/feeds/full?format=json

Response: { "cursor": null, "items": [{ "id": "123", "url": "...", "last_modified": "2025-01-01T12:00:00Z" }, ...] } GET /v1/feeds/changes?cursor=abc123

Response: { "next_cursor": "def456", "items": [ ... ] }

Sample webhook payload: {"event":"item.updated","id":"123","timestamp":"..."}. Validate HMAC and respond 200 quickly; accept retries and mark idempotency tokens.

Image prompt alt_text examples for editorial use:

- "Sequence diagram showing cursor pagination preventing duplicates during concurrent updates"

- "Decision matrix illustrating when to use webhooks versus pull feeds for SEO workflows"

Testing & staging: dry-run exports, sample payloads, and validation

Why this section matters: test pipelines prevent costly production mistakes. Always provide a dry-run mode that validates payloads without applying changes.

Dry-run export: return the same response as production but with an annotated preview and validation errors. Include sample payloads for all actions (create, update, delete, redirect). Automate schema validation (JSON Schema) and content checks (canonical, hreflang). Provide a staging endpoint or a query parameter ?dry_run=true.

| Checklist | Action |

|---|---|

| Schema validation | Validate every payload against JSON Schema |

| Idempotency test | Resend same request and expect no duplicate actions |

| Backfill test | Replay last 24 hours of changes into staging |

Troubleshooting common failure modes and mitigation checklist

Why this section matters: predictable failures should map to standard mitigations. Common failure modes include 429 spikes, partial batch failures, webhook delivery timeouts, and schema drift.

Mitigations checklist (copyable):

- 429 spikes: reduce concurrency, increase page size, implement exponential backoff

- Partial failures: requeue failed item_ids using stored idempotency keys

- Webhook timeouts: queue events and retry with jitter; implement dead-letter queues

- Schema drift: fail fast and reject payloads until a compatibility mode is enabled

For each incident, capture the failing cursor, affected item IDs, and diagnostic logs. Create a runbook step: reproduce in staging, apply hotfix, run a reconciliation using the changes endpoint.

Conclusion: rollout timeline and KPIs to measure success (freshness, error rate)

Rollout timeline: start with a staging dry-run (week 1), small production test with a subset of records (week 2), scale to full incremental sync (week 3), then enable webhooks for hot paths (week 4). These are example phases; adapt to your release process.

Measure success using these KPIs: feed success rate (>99% ideally), average sync latency (target under 10 minutes for incremental flows), error rate (number of failed items per 1000), and change lag (median time from source change to published page). Track reliability patterns api feeds by monitoring P95 processing time and the rate of idempotency collisions. For geo-heavy deployments, measure regional sync latency and deploy endpoints closer to major audiences to reduce round-trip and improve localized freshness.

FAQ

What is api patterns for large programmatic feeds?

API patterns for large programmatic feeds are documented design approaches—pagination, incremental feed export, rate limiting, retry semantics, and webhook vs pull feed tradeoffs—used to scale and keep programmatic pages fresh on Lovable sites.

How does api patterns for large programmatic feeds work?

They work by combining a transport strategy (full or incremental exports plus webhooks), stable pagination (cursor-based), polite rate limiting with client backoff, idempotent retries, and monitoring so feed consumers and providers exchange only the necessary changes and recover predictably from failures.

Ready to Rank Your Lovable App?

This article was automatically published using LovableSEO. Get your Lovable website ranking on Google with AI-powered SEO content.

Get Started