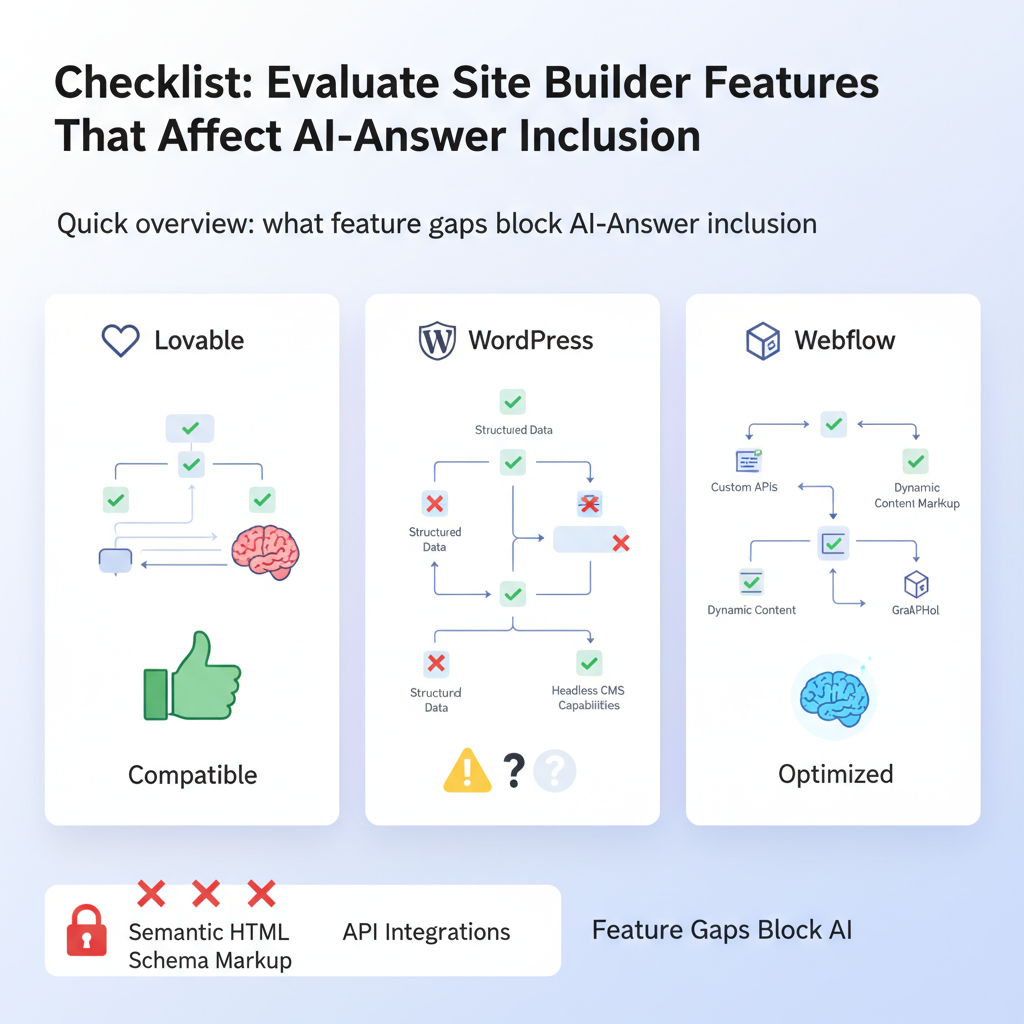

Checklist: Evaluate Site Builder Features That Affect AI-Answer Inclusion (Lovable vs WordPress vs Webflow)


Quick overview: what feature gaps block AI-answer inclusion

If search engines and generative systems ignore your best pages, the underlying cause is usually a site-level constraint, not just content quality. You might publish clear answers, but the site builder strips schema, rewrites canonical links, or forces long lead paragraphs that confuse extraction. This article gives you a practical checklist of site builder features that affect AI answers, so you can find site-level blockers and fix them fast.

Quick answer

Make sure your builder lets you edit structured data, inject concise lead answers (≤60 words), control URLs and redirects, and publish programmatically at scale. Then run repeatable AI snippet probes across locales. For more on this, see Site builder ai answer seo.

Quotable: "AI-answer inclusion requires both machine-readable signals (schema, HTML structure) and human-facing clarity (concise lead answer ≤60 words)."

Editable structured data and FAQ schema controls (test cases)

Structured data is the most deterministic signal for AI and search snippet extraction. If your builder prevents editing JSON-LD or automatically mangles FAQ schema, you lose the chance to appear in AI-generated answers. Test cases to run: add an explicit FAQPage JSON-LD block, an Answer object with a 40–60 word lead, and a simple HowTo step list. Then verify rendered HTML contains the JSON-LD unchanged.

Actionable tests:

- Add a minimal FAQPage JSON-LD to a page and check the response HTML for the intact script tag.

- Create a 50-word lead answer and confirm the builder doesn't move or truncate it into a modal or accordion by default.

- Place schema both inline (JSON-LD) and as microdata to measure extraction variance.
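
The first test above can be sketched in Python. This is a minimal sketch, not a definitive validator: it assumes you have already fetched the page and hold its HTML in a string, and the helper names (`build_faq_jsonld`, `JsonLdExtractor`, `jsonld_survived`) are hypothetical. It builds a small FAQPage object, extracts every `application/ld+json` script from the HTML, and checks whether an identical object survived rendering.

```python
import json
from html.parser import HTMLParser


def build_faq_jsonld(question: str, answer: str) -> dict:
    """Return a minimal schema.org FAQPage object with one Q&A pair."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
        ],
    }


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self) -> None:
        super().__init__()
        self._in_jsonld = False
        self.blocks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def jsonld_survived(rendered_html: str, expected: dict) -> bool:
    """True if any JSON-LD block in the HTML parses to exactly `expected`."""
    parser = JsonLdExtractor()
    parser.feed(rendered_html)
    parser.close()
    for raw in parser.blocks:
        try:
            if json.loads(raw) == expected:
                return True
        except json.JSONDecodeError:
            continue  # a mangled block counts as a failure, not a crash
    return False
```

Comparing parsed JSON rather than raw text tolerates harmless whitespace or key-order changes. If you need to prove the block is byte-for-byte unchanged, compare the raw strings in `parser.blocks` instead.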

Re-run your AI snippet probes after each change. Prioritize queries backed by real Google Search Console (GSC) data; for example, the sample query 'loveable vs wordpress' (1,417 impressions) is a reasonable place to start. For more on this, see Best site builder for saas seo.

Content templates & programmatic publishing — why they matter for scale

When you publish hundreds or thousands of Q&A pages, manual edits fail. Templates that support editable meta blocks, lead-answer fields, and schema placeholders let you scale concise, extractable answers. If your builder lacks programmatic publishing APIs or CSV import of structured fields, you can't reliably produce the machine-readable signals AI systems need.

Concrete steps:

- Create a content template with discrete fields: question, lead answer (≤60 words), long answer, FAQ JSON-LD, canonical URL.

- Test a programmatic publish: import 50 rows with different locales and confirm each output preserves schema and lead answer position.

- Measure time-to-index for a sample batch to detect publishing or caching delays.
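
One way to picture the template and the import test is the sketch below. It assumes a CSV whose header matches the template's field names; the `QAPage` class, its field names, and `load_rows` are hypothetical and not tied to any builder's API. The FAQ JSON-LD is generated from the question and lead answer rather than stored by hand, which is one possible design, not the only one.

```python
import csv
import io
import json
from dataclasses import dataclass

MAX_LEAD_WORDS = 60  # the lead-answer limit used throughout this checklist


@dataclass
class QAPage:
    """One template row: each signal lives in its own field."""
    question: str
    lead_answer: str
    long_answer: str
    canonical_url: str
    locale: str

    def problems(self) -> list[str]:
        """Return validation problems; an empty list means publishable."""
        found = []
        words = len(self.lead_answer.split())
        if not self.question.strip():
            found.append("missing question")
        if words == 0:
            found.append("missing lead answer")
        elif words > MAX_LEAD_WORDS:
            found.append(f"lead answer is {words} words (max {MAX_LEAD_WORDS})")
        if not self.canonical_url.strip():
            found.append("missing canonical URL")
        return found

    def faq_jsonld(self) -> str:
        """Generate the FAQ JSON-LD field from the question and lead answer."""
        return json.dumps({
            "@context": "https://schema.org",
            "@type": "FAQPage",
            "mainEntity": [{
                "@type": "Question",
                "name": self.question,
                "acceptedAnswer": {"@type": "Answer", "text": self.lead_answer},
            }],
        })


def load_rows(csv_text: str) -> list[QAPage]:
    """Parse CSV text whose header matches the QAPage field names."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [QAPage(**row) for row in reader]
```

Running `problems()` on every imported row before publishing catches over-long lead answers in the batch, before they reach a live page.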

Quotable: "Templates that separate the lead answer and schema fields make programmatic publishing reliable for AI-answer inclusion."

Always store the concise answer as its own editable field; mixing it into a long page body reduces extraction reliability.

URL and redirect control: exact-match test scenarios

AI answer extractors prefer canonical, stable URLs that match the query intent. If a builder forces hashed URLs, appends session parameters, or converts your slug structure unpredictably, run exact-match tests to expose failures. Test scenarios include creating target URLs with and without trailing slashes, publishing the same content under multiple slugs, and enforcing 301s to the preferred canonical.

Exact-match test checklist:

- Publish page at /lovable-vs-wordpress and verify the final served URL exactly matches the slug you set.

- Create a duplicate page, set a 301 redirect, and confirm the server returns a single 301 whose Location header points to the preferred URL, and that the destination page declares that same URL as its only canonical.

- Simulate query extraction by comparing the displayed lead answer at the canonical URL vs. the redirected URL.

Quotable: "Stable, exact-match URLs and single canonical headers reduce ambiguity for snippet extraction."

Redirects should be a single server-side 301 to the preferred URL, and that URL should declare itself as canonical; client-side redirects break many snippet pipelines.
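
The redirect checks can be expressed as a small pure function. This sketch assumes you have already recorded each response in the chain (for example with an HTTP client configured not to follow redirects); the `Hop` record and both helper names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlsplit


@dataclass
class Hop:
    """One response in a redirect chain, recorded without following redirects."""
    url: str
    status: int
    location: Optional[str] = None  # Location header value, if present


def served_path_matches(final_url: str, slug: str) -> bool:
    """Exact match: '/page' and '/page/' are treated as different URLs."""
    return urlsplit(final_url).path == slug


def check_redirect(hops: list[Hop], preferred_url: str) -> list[str]:
    """Verify a duplicate URL reaches preferred_url through exactly one 301."""
    if not hops:
        return ["no responses recorded"]
    problems = []
    first, last = hops[0], hops[-1]
    if len(hops) != 2:
        problems.append(f"expected exactly 1 redirect, saw {len(hops) - 1}")
    if first.status != 301:
        problems.append(f"first response was {first.status}, not 301")
    if first.location != preferred_url:
        problems.append(f"Location header was {first.location!r}")
    if last.status != 200 or last.url != preferred_url:
        problems.append(f"chain ended at {last.url} with status {last.status}")
    return problems
```

An empty list means the duplicate resolves cleanly. A chain with two or more redirects, or a 302 where you expected a 301, shows up as a named problem you can log per builder.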

Performance & Lighthouse thresholds to aim for (mobile-first)

AI systems and modern search both favor pages that load quickly on mobile. For typical content-heavy sites, target a Lighthouse performance score of 80+ and P95 Time to Interactive under 1.5s on simulated 4G throttling. If your builder injects large runtime libraries or defers critical content behind client JS, snippet extractors may only see placeholder text at crawl time.

Concrete thresholds:

- Largest Contentful Paint (mobile): <2.5s

- First Contentful Paint: <1.2s

- P95 time-to-interactive: <1500ms (on 4G emulation)

When you evaluate site builders, run Lighthouse emulation on a representative page with schema and lead answer present. Note if the builder inlines critical content or defers it behind JS frameworks.
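
To compare builders consistently, you can encode the thresholds above and apply them to each test run. This is a sketch under stated assumptions: the metric key names are hypothetical, the numbers come from whatever tool you use to measure, and P95 is computed with the nearest-rank method, which is one of several common definitions.

```python
# Thresholds from this section; times are in milliseconds.
MIN_LIGHTHOUSE_SCORE = 80
MAX_LCP_MS = 2500
MAX_FCP_MS = 1200
MAX_TTI_P95_MS = 1500


def p95(samples: list[float]) -> float:
    """Nearest-rank 95th percentile of a non-empty list of samples."""
    if not samples:
        raise ValueError("p95 needs at least one sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def check_page(metrics: dict) -> dict[str, bool]:
    """Compare one page's measurements with the thresholds above."""
    return {
        "lighthouse_score": metrics["lighthouse_score"] >= MIN_LIGHTHOUSE_SCORE,
        "lcp_ms": metrics["lcp_ms"] < MAX_LCP_MS,
        "fcp_ms": metrics["fcp_ms"] < MAX_FCP_MS,
        "tti_p95_ms": p95(metrics["tti_samples_ms"]) < MAX_TTI_P95_MS,
    }
```

P95 only means something with repeated runs, so collect several time-to-interactive samples per page rather than judging a builder on a single measurement.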

Server response and crawlability checks (robots, sitemaps, canonical headers)

Crawlability failures are invisible until they cost you AI-answer inclusion. Confirm the builder exposes a standard sitemap.xml that you can edit or regenerate, and that robots.txt doesn't block snippet pages. Check each page's canonical link tag (or canonical Link response header), and confirm the builder does not send an X-Robots-Tag: noindex header on templates or preview pages by default.

Actionable checklist:

- Fetch /robots.txt and validate no disallow directives for target paths.

- Verify sitemap entries include lastmod and reflect published pages exactly.

- Request pages and inspect canonical link tags and X-Robots-Tag headers for contradictions.
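
The robots.txt and header checks can be scripted with Python's standard library. This sketch assumes you have already downloaded robots.txt as text and captured each page's response headers and canonical URL; the function names and the example URLs in the usage note are hypothetical.

```python
from typing import Optional
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], agent: str = "*") -> list[str]:
    """Return the URLs that robots.txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(agent, url)]


def indexing_conflicts(
    headers: dict[str, str], canonical_href: Optional[str], page_url: str
) -> list[str]:
    """Flag noindex headers and missing or mismatched canonical URLs."""
    # Header names are case-insensitive, so normalise before the lookup.
    lowered = {name.lower(): value for name, value in headers.items()}
    problems = []
    if "noindex" in lowered.get("x-robots-tag", "").lower():
        problems.append("X-Robots-Tag header contains noindex")
    if canonical_href is None:
        problems.append("no canonical URL declared")
    elif canonical_href != page_url:
        problems.append(f"canonical points to {canonical_href}")
    return problems
```

For example, `blocked_urls(robots_text, ["https://example.com/lovable-vs-wordpress"])` should return an empty list for every page you want extracted. A canonical that points elsewhere is not always a bug, but on a target snippet page it deserves a manual look.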

Snippet preview tests: how to simulate AI answer extraction

Reproducible snippet preview tests let you see exactly what an extractor would find. Use a simple test matrix with these columns: country, test_url, snippet_length_chars, schema_present (yes/no), and time_to_index_days. Prioritize probes using GSC query data; for instance, include 'loveable vs wordpress' as a high-impression probe.

| country | test_url | snippet_length_chars | schema_present | time_to_index_days |
|---|---|---|---|---|
| US | /lovable-vs-wordpress | 55 | yes | 2 |
| GB | /lovable-vs-webflow | 48 | no | 4 |

Re-run this snippet test matrix after each builder change and after any CDN or caching rule update. Add geo fields to measure regional index differences.
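
To keep the matrix reproducible between runs, you can store each probe as a typed record and diff two runs. This is a sketch, assuming the column names described above; the `ProbeResult` class and both functions are hypothetical helpers, and what counts as a regression is a judgment call you may want to adjust.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ProbeResult:
    country: str
    test_url: str
    snippet_length_chars: int
    schema_present: str  # "yes" or "no", matching the matrix
    time_to_index_days: int


def to_csv(results: list[ProbeResult]) -> str:
    """Serialise probe results with one header row, in field order."""
    buffer = io.StringIO()
    names = [field.name for field in fields(ProbeResult)]
    writer = csv.DictWriter(buffer, fieldnames=names, lineterminator="\n")
    writer.writeheader()
    for result in results:
        writer.writerow(asdict(result))
    return buffer.getvalue()


def regressions(before: list[ProbeResult], after: list[ProbeResult]) -> list[str]:
    """List probes that lost schema or became slower to index between runs."""
    previous = {(r.country, r.test_url): r for r in before}
    flagged = []
    for r in after:
        old = previous.get((r.country, r.test_url))
        if old is None:
            continue  # new probe, nothing to compare against
        lost_schema = old.schema_present == "yes" and r.schema_present == "no"
        slower = r.time_to_index_days > old.time_to_index_days
        if lost_schema or slower:
            flagged.append(f"{r.country} {r.test_url}")
    return flagged
```

Keying on country plus URL means the same page probed from two locales is tracked separately, which is what lets regional index differences show up.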

Feature scoring template (scorecard you can run in 30–90 minutes)

Run a 30–90 minute audit using a weighted scorecard. Score each core capability from 0 to 2: editable JSON-LD, lead answer field, programmatic publish API, URL control, sitemap/robots editing, and performance. Weight schema and lead-answer fields higher for AI answer goals.

| Feature | Score (0-2) | Weight | Weighted |
|---|---|---|---|
| Editable JSON-LD | 2 | 3 | 6 |
| Lead answer field | 1 | 3 | 3 |
| Programmatic publish API | 2 | 2 | 4 |

Use the scorecard to evaluate and compare platforms quickly and produce a single weighted score you can track over time.
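
The weighted total is just the sum of score times weight. The sketch below uses only the three weights shown in the table; weights for URL control, sitemap/robots editing, and performance are not given here, so add your own. The function and variable names are hypothetical.

```python
# Weights from the scorecard table; extend with your own for other features.
WEIGHTS = {
    "Editable JSON-LD": 3,
    "Lead answer field": 3,
    "Programmatic publish API": 2,
}


def weighted_total(scores: dict[str, int], weights: dict[str, int]) -> int:
    """Sum score * weight, rejecting scores outside the 0-2 scale."""
    total = 0
    for feature, score in scores.items():
        if score not in (0, 1, 2):
            raise ValueError(f"{feature}: score must be 0, 1, or 2, got {score}")
        if feature not in weights:
            raise KeyError(f"no weight defined for {feature}")
        total += score * weights[feature]
    return total


example_scores = {
    "Editable JSON-LD": 2,
    "Lead answer field": 1,
    "Programmatic publish API": 2,
}
print(weighted_total(example_scores, WEIGHTS))  # 6 + 3 + 4 = 13
```

Failing loudly on a missing weight or an out-of-range score keeps totals comparable when several people fill in the scorecard.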

Example results: sample scorecard for Lovable, WordPress, Webflow

Below is a compact example you can copy. The table shows four of the six scorecard features, and the sample values and totals are illustrative. They show where builders typically differ: WordPress often wins on editable schema via plugins; Webflow gives flexible URLs and cleaner HTML but may require custom scripts; Lovable (in the lovableseo.ai context) aims for structured templates and programmatic publishing.

| Platform | Schema edit | Lead answer | Programmatic publish | URL control | Total (weighted) |
|---|---|---|---|---|---|
| Lovable | 2 | 2 | 2 | 2 | 18 |
| WordPress | 2 | 1 | 1 | 2 | 14 |
| Webflow | 1 | 1 | 0 | 2 | 11 |

Interpretation: use the scorecard to prioritize fixes, not as an absolute decision. For example, if Lovable scores high on lead answers and schema, prioritize programmatic publishing next.

Recommendations: next steps and how to feed results into an SEOAgent playbook

Run the 30–90 minute scorecard, export results to CSV, and feed them into your SEOAgent playbook as structured inputs: enable/disable schema, set lead-answer field, and schedule re-index probes by locale. Use the reproducible test matrix to validate changes. For queries with high impressions in Search Console (e.g., 'loveable vs wordpress' — 1,417 impressions), run additional geo-specific probes to measure regional variance.

Next steps checklist:

- Run the feature scorecard and record weighted totals.

- Implement schema and lead-answer fixes on a staging page and re-run snippet preview tests.

- Schedule programmatic publishes and monitor time-to-index for each locale.

FAQ

What is the checklist? The checklist is a reproducible site audit that tests editable structured data, lead-answer fields, URL control, programmatic publishing, performance thresholds, and crawlability to measure a builder's readiness for AI-answer inclusion.

How does the checklist work? The checklist provides test cases and a weighted scorecard you can run in 30–90 minutes; results feed into your SEOAgent playbook and a snippet test matrix to validate AI-answer extraction across locales.

This article was automatically published using LovableSEO.